Just as with input parameters, in order to be reusable, it should be obvious that a pipeline will need to return details of the activities performed so that subsequent pipelines or other activities can take place. In this example, the dynamic expression for Items of the ForEach PackageName activity uses the PackageNames parameter as CSV string to iterate over the values provided: will be more samples in the Real-World Examples section that follows. For these cases, the pipeline description should detail this and/or other documentation should accompany the pipeline so that users understand how is is to be used. For example, a String parameter may be a comma-separated-value (CSV) which will be handled by an expression. The use of expressions introduces a dependency on the content and format of parameter values beyond simple being required or optional. These expressions can prepare the data for activities within the pipeline. Part of the rules of a pipeline can include expressions that process the parameter values provided when run. Each parameter can also include a Default Value, making a specific parameter value optional when the pipeline is run.

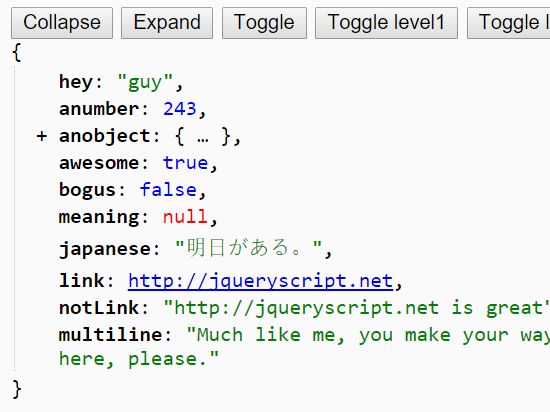

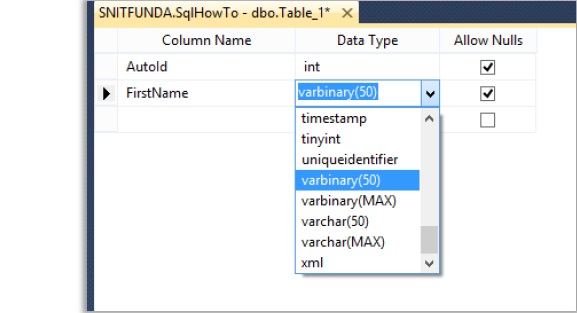

Parameters can be different types: String, Int, Float, Bool, Array, Object, and SecureString. In order to be reusable, it should be obvious that a pipeline will need to allow for input parameters so that each invocation can perform the function as required. ADF pipelines include propertied for Name (required) and Description which can be used to provide for basic documentation of the pipeline to communicate with it’s consumers.

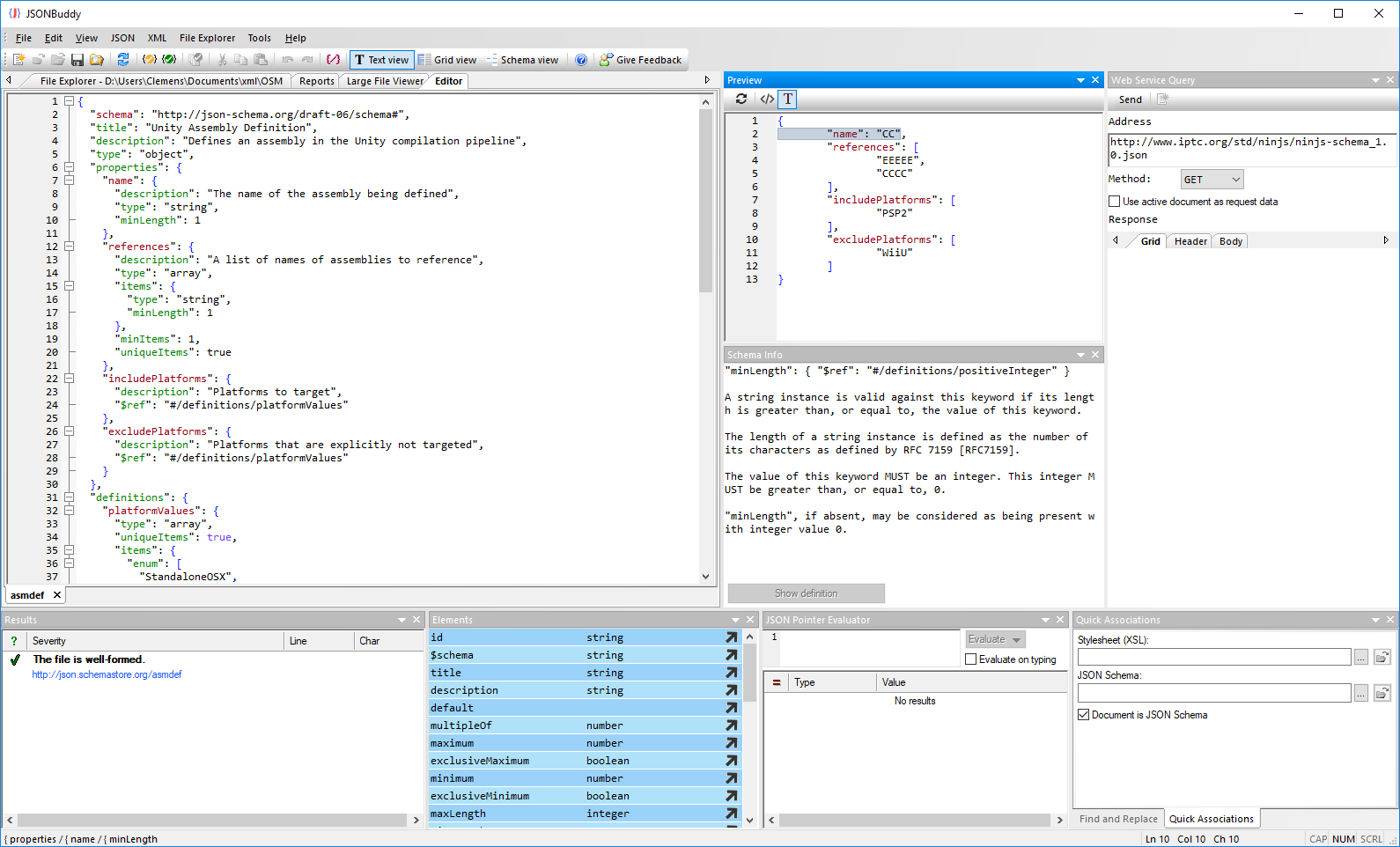

It’s logic, rules and operations will be a “black box” to users of the pipeline, requiring only knowledge of “what” is does rather than “how” it does. Just like code, a pipeline should be focused on a specific function and be self-contained. Utilized Functions and JSON data to pass messages.However, there are some things about pipelines to take into account in order to do so: The same approach can be taken with pipelines. This helps in countless ways like testing, debugging and making incremental changes. As a result, software best practices promote refactoring procedures into smaller pieces of functionality. This is similar to any procedure in code, the longer it gets the ability to edit, read, understand becomes harder and harder. Pipelines are composed of activities, as the number of activities and conditions increases, the difficulty in maintaining the pipeline increases as well. In this post, I share some lessons and practices to help make them more modular to improve reuse and manageability. Azure Data Factory (ADF) pipelines are powerful and can be complex.
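The parameter handling described above (default values that make a parameter optional, a CSV string that an expression splits for a ForEach, and details returned to the caller) can be sketched outside ADF. This is a rough Python analogy, not ADF code: `Environment`, the function names, and the shape of the returned details are all hypothetical, and only `PackageNames` comes from the example above. In ADF itself, the splitting would typically be done with the built-in `split` expression function, for instance `@split(pipeline().parameters.PackageNames, ',')`, though the exact expression used in the original pipeline is an assumption here.

```python
# Hypothetical parameter declarations: a parameter with no "default" is required.
DECLARED = {
    "PackageNames": {"type": "String"},                   # required CSV string
    "Environment": {"type": "String", "default": "dev"},  # optional (assumed name)
}


def resolve_parameters(declared, supplied):
    """Apply supplied values over declared defaults, rejecting missing required ones."""
    resolved = {}
    for name, spec in declared.items():
        if name in supplied:
            resolved[name] = supplied[name]
        elif "default" in spec:
            resolved[name] = spec["default"]
        else:
            raise ValueError(f"Missing required parameter: {name}")
    return resolved


def split_csv(value):
    """Split a CSV string into items. Trimming whitespace and skipping
    empty entries is a convenience added in this sketch."""
    return [item.strip() for item in value.split(",") if item.strip()]


def run_pipeline(supplied):
    """Iterate over each package name and return details of what was done."""
    params = resolve_parameters(DECLARED, supplied)
    processed = []
    for package_name in split_csv(params["PackageNames"]):
        # A real pipeline would run activities here; this sketch only records the item.
        processed.append({"package": package_name,
                          "environment": params["Environment"]})
    return {"processed": processed}


if __name__ == "__main__":
    print(run_pipeline({"PackageNames": "Sales, Inventory,Finance"}))
```

The sketch also shows why the pipeline description matters: nothing in the declared type `String` tells a caller that `PackageNames` must be comma-separated, so that contract has to be documented alongside the pipeline.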
